Exceptional technical episode today with Dr. Trevor Manz on "marimo Pair", an (actually!) game-changing pair-programming A.I.-agent companion that lifts heavy loads within your Python data-science notebook.

More on Trevor:

• 27-time NCAA Swimming All-American & National Champion.

• Master's in Computational Biology from University of Cambridge.

• PhD in Bioinformatics from Harvard University.

• Creator of the popular open-source "anywidget" project (amongst many others, particularly in visualizing bioinformatics data, e.g., genomics data).

• Now a founding engineer at marimo.io, where he is leading the charge on marimo Pair.

Seriously, marimo Pair is unreal. A complete reimagining of what's possible in a Jupyter notebook-style environment in the agentic A.I. era. You will hear (and see) my mind explode in this episode!

We also discuss:

• Agent skills.

• Recursive language models.

• A number of other open-source projects, largely in data viz/analysis.

The SuperDataScience podcast is available on all major podcasting platforms, YouTube, and at SuperDataScience.com.

Web Summit Vancouver 2026

"Collision" has grown and re-branded as "Web Summit Vancouver". I'm looking forward to experiencing the new brand for the first time next week! See you there? Here's where you can catch me:

• Tue May 12 at 11am: Mentor Hours on "scaling your startup"

• Wed May 13 at 1:30pm: Delivering my agentic A.I. talk ("Something Big is Happening") on the "A.I. Summit" stage.

• Wed May 13 at 1:50pm: Emceeing the "A.I. Summit" stage all afternoon.

More on Web Summit Vancouver:

• Taking place May 11–14 at the Vancouver Convention Centre.

• It's the second year in a row the conference, under this new brand, has taken place (the previous "Collision"-branded event was held annually in Toronto and the photo in this post is from a talk I gave there in 2024).

• Connects over 35,000 startup founders, investors and industry leaders to discuss A.I., entrepreneurship and tech trends.

Security for Mythos-Era Agentic Risks, with Rubrik’s Anneka Gupta and Cal Al-Dhubaib

Mythos finds security vulnerabilities at ~100X the rate of publicly available models, and comparable open-weight models are ~6 months away. Scary? Thankfully my guests today, Anneka and Cal, have solutions!

Anneka:

• Chief Product Officer at Rubrik.

• Lecturer in Product Management at Stanford University.

• Climbed the ladder from software engineer to President (!!) during an 11-year tenure at LiveRamp.

• Holds a degree in math and computational sciences from Stanford.

Cal:

• Principal Technologist at Rubrik.

• Formerly founder and CEO of Pandata, which was acquired by Further.

• Highly sought-after keynote speaker.

• Holds a degree in data science from Case Western Reserve University.

This is an exceptional episode with two brilliant, entertaining and highly knowledgeable guests. It can be enjoyed by anyone! In it, they cover:

• How Anthropic's Mythos model can be pointed at a code repository and autonomously surface every vulnerability inside it, and how Anthropic itself estimates Mythos-class capabilities will reach other labs within six to eighteen months, with open-weight versions likely to follow.

• How code-gen models make life easier for attackers, both by scaling up attackers' capabilities... and because vibe-coders often aren't aware of the vulnerabilities in their own code!

• How Rubrik's Agent Cloud delivers three pillars of resilience: visibility into every agent in your environment, governance and runtime control through the SAGE small language model, and remediation through Agent Rewind.

• Why the next wave of knowledge work is inherently cross-functional, with A.I. attorneys, security pros, and data scientists all needing shared literacy in A.I. risk.

The SuperDataScience podcast is available on all major podcasting platforms, YouTube, and at SuperDataScience.com.

In Case You Missed It in April 2026

Whoa, it's May Day... and our podcast-production team was *on the ball* with getting our ICYMI-in-April episode together lickety-split. In case you missed it, these were the best bits of my on-air convos last month:

1. Oracle's Director of A.I. Developer Experience Richmond Alake defines the four types of memory A.I. agents can have... and the biological inspiration for each of them.

2. Matthew J. Glickman, co-founder/CEO of Genesis Computing, describes how A.I. agents allow data engineers to dramatically scale up their impact in an enterprise.

3. The A.I. infrastructure engineer Linda Haviv has amassed a following of over 250,000 folks on social media. In her clip from last month, she combines both worlds — detailing why A.I. infrastructure has now become everyone's problem while also discussing her work in lowering the barrier to access A.I. education.

4. Traci Walker Griffith, principal of The Eliot School in Boston, shares her novel perspective on what critical thinking is... in the context of how fifth-graders are leveraging A.I. to evaluate their work and prepare for tests.

The SuperDataScience podcast is available on all major podcasting platforms, YouTube, and at SuperDataScience.com.

AI Infrastructure, Ray, and Why Nonlinear Careers Win, with Linda Haviv

For folks in A.I., software and data science, things are moving so fast that it's easy to be overwhelmed. Luckily, A.I. engineer Linda Haviv makes it a joy to stay up to date! Today, we discuss career tips as well as open-source A.I. tech like Ray.

More on Linda:

• Until recently, was Staff Developer Advocate at Anyscale, makers of Ray, an open-source framework for managing, executing and optimizing A.I. compute.

• Previously was A.I. Developer Advocate at Amazon Web Services (AWS).

• Before that, was a software developer at Fox Corporation.

• Was a professional singer in New York up until her second (of three!) children was born.

• Holds a degree in philosophy from Baruch College.

In this episode, Linda ebulliently covers:

• How "A.I. infrastructure" refers to the compute stack, tooling and frameworks purpose-built for A.I. and ML workloads.

• How Ray is a Python-native open-source distributed computing framework that lets engineers distribute training, data processing and model serving across GPUs without needing to become distributed systems experts.

• How building in public, creating content and contributing to open source are not just career insurance... they're how you find your community, attract unexpected opportunities and learn faster through teaching.

• And much more!

The SuperDataScience podcast is available on all major podcasting platforms, YouTube, and at SuperDataScience.com.

Building Hardware is Hard but AI Agents Help, with Kishore Subramanian

In software, when something goes wrong, you push a patch. In hardware? Oooph. You're dealing with big headaches and huge costs. Thankfully, my guest today — Kishore Subramanian — is using AI to transform the way physical products get built for the better.

Kishore:

• Is CTO of Propel Software, a Bay Area company that combines product data with agentic AI to make the production of physical hardware (including high tech and medtech devices) as seamless as possible.

• Prior to Propel, held senior engineering roles at Google, where he worked on Google Assistant, so he has particularly rich experience with agent development.

• Holds a degree in electronics, computers and process control… as well as a 200-hour yoga-teaching certificate!

In this episode, Kishore covers:

• How product lifecycle management (PLM) is the system that takes a physical product from concept all the way to the customer and beyond.

• How AI agents can review engineering change orders — the hardware equivalent of pull requests — to flag risks, compliance gaps, and downstream impacts before they become expensive problems.

• How Propel built their AI platform, Propel One, on top of Salesforce's Agentforce 360 Platform, which gave them security, governance, data infrastructure, and a reasoning engine out of the box, allowing them to ship in about six months.

The SuperDataScience podcast is available on all major podcasting platforms, YouTube, and at SuperDataScience.com.

The Four Types of Memory Every AI Agent Needs, with Richmond Alake

To build an effective A.I. agent, getting its memory right is essential. In today's episode, our agent-memory guide is brilliant (and very funny!) machine-learning architect and engineer, Richmond Alake.

More on Richmond:

• Director of A.I. developer experience at Oracle.

• Previous roles include: staff developer advocate for AI/ML at MongoDB, ML architect at Slalom, writer for NVIDIA and computer-vision engineer at Loveshark.

• Holds a master's in ML and robotics from the University of Surrey.

In this episode, Richmond magnificently covers:

• How agent memory is the encapsulation of systems (embedding models, rerankers, databases, and LLMs) that allow AI agents to learn and adapt with new information over time, rather than starting from scratch every session.

• The four types of agent memory (all drawn from human cognition).

• Memory-first agent harnesses.

• Predictions for a flattening of AI engineering roles, where the future developer will need end-to-end understanding of the full agent stack.

The SuperDataScience podcast is available on all major podcasting platforms, YouTube, and at SuperDataScience.com.

Building AI Agents Where 99.9% Accuracy Isn't Good Enough, with Raju Malhotra

The headlines shout “SaaSpocalypse,” but I don’t buy it. Neither does my guest today, Raju Malhotra, who argues that, thanks to humans collaborating with agents on optimized workflows, the SaaS opportunity is now far bigger than ever before.

More on Raju:

Chief Product & Technology Officer (CPTO) at Certinia, an Austin, Texas-based company whose Professional Services Automation software is used by over 1400 organizations around the world.

Was previously CPTO at PAR Technology and Khoros.

Earlier, spent 12 years at Microsoft working on cornerstone products like Visual Studio .NET.

Holds an MBA from The Wharton School and an undergrad in computer engineering.

In this episode, we cover:

Traditional SaaS isn't dead… instead, it's evolving into a hybrid of SaaS plus agentic capabilities, where humans and agents work together in optimized workflows.

By removing the human-skills constraint from professional services delivery, the agentic revolution could expand the addressable market by 7-8X.

The Agentforce 360 platform (by combining probabilistic AI with deterministic logic and guardrails) empowers innovators to turn their ideas into scalable software businesses, allowing businesses like Certinia to bring AI agents securely and reliably to their customers, even in sensitive industries where 0.1% error rates are unacceptable.

The SuperDataScience podcast is available on all major podcasting platforms, YouTube, and at SuperDataScience.com.

AI in the Classroom: How a Top Elementary School Is Doing It Right, with Principal Traci Walker Griffith

Long overdue episode today on how A.I. can support children's education. Hard to imagine a better guest than Traci Walker Griffith, principal of a K-8 school that has used innovations like A.I. to become Boston's #1 school.

In this episode, we discuss:

How Traci transformed The Eliot School from an underperforming school on the closure list into the highest-performing school in Boston.

How kids as young as four at the Eliot work with robots and coding tools like Kibo and Scratch Junior, learning that the quality of their input determines the quality of their output ("garbage in, garbage out").

How, for younger students in kindergarten through fourth grade, teachers use A.I. behind the scenes.

How students in grades five through eight interact with A.I. directly, enabling them to build metacognition and critical-thinking skills.

Her concrete guidance for schools (or parents!) considering incorporating A.I. into pedagogy.

The SuperDataScience podcast is available on all major podcasting platforms, YouTube, and at SuperDataScience.com.

In Case You Missed It in March 2026

It just keeps getting better and better... ICYMI, my on-air conversations with guests in March were extraordinary. Today's episode highlights the best bits from last month, specifically:

Zack Kass (who was head of go-to-market at OpenAI when ChatGPT was launched and who recently wrote the bestselling book "The Next RenAIssance") details why classrooms must change in the age of A.I.

Renowned New York University professor KyungHyun Cho explains why A.I. learning to explore the world like humans will unlock major progress in A.I. capability.

Three-time bestselling O'Reilly author Chris Fregly tells us why, if we're still writing code manually in 2026, we're behind the times.

Fireworks AI CEO Lin Qiao explains the difference between artificial general intelligence (AGI) and what she terms "autonomous intelligence".

Acceldata CEO Rohit Choudhary provides a clear vision for how job roles will be transformed by A.I.

The SuperDataScience podcast is available on all major podcasting platforms, YouTube, and at SuperDataScience.com.

How Data Engineers Are “10x’ing” Themselves With Agents, feat. Matt Glickman

Something big happened in February that changed the world forever. My guest today, Matthew J. Glickman, says code-generating models crossed an event horizon... and there's no turning back. Listen in for the implications.

More on Matt:

Co-founder and CEO of Genesis Computing, a New York-based company building enterprise-ready data agents that automate everything from raw data to production applications, compressing projects that took months into hours while recovering massive hiring costs.

Previously spent over two decades at Goldman Sachs leading analytics and data platform teams, then joined Snowflake as employee 81, where he led Product Management, launched the Snowflake Marketplace, and grew Financial Services into Snowflake’s largest industry vertical.

Holds a degree in Computer Science and Math.

In this episode, which will be fascinating to anyone but especially to hands-on A.I. and data practitioners, we discuss:

How February 2026 marked the moment the latest frontier models crossed a threshold where they could handle complex, multi-step data engineering workflows that previously required human expertise... and there's no going back.

How finance and healthcare were late to adopt the cloud but are among the earliest and most aggressive adopters of A.I.

How Genesis deploys its agentic platform directly inside a client's environment (more like onboarding a new employee than adopting a SaaS product) so that all accumulated knowledge remains the company's asset.

How, rather than acting as a copilot that waits for human instructions step by step, Genesis inverts the model: Agents work autonomously on complex data engineering tasks and only escalate to humans when their confidence is low, memorializing every answer so they never ask the same question twice.
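
The "escalate only when confidence is low, and never ask twice" pattern from that last point can be sketched in a few lines. To be clear, this is my own minimal illustration and not Genesis's implementation: the class name `EscalatingAgent`, the `guess` and `ask_human` callables, and the 0.8 threshold are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Tuple


@dataclass
class EscalatingAgent:
    """Hypothetical agent that answers on its own unless its confidence is low."""

    guess: Callable[[str], Tuple[str, float]]  # returns (answer, confidence in [0, 1])
    ask_human: Callable[[str], str]            # escalation path
    threshold: float = 0.8                     # arbitrary illustrative cutoff
    memory: Dict[str, str] = field(default_factory=dict)

    def resolve(self, question: str) -> str:
        # Anything a human has already answered is reused, never re-asked.
        if question in self.memory:
            return self.memory[question]
        answer, confidence = self.guess(question)
        if confidence < self.threshold:
            # Low confidence: escalate once and memorialize the human's answer.
            answer = self.ask_human(question)
            self.memory[question] = answer
        return answer
```

In use, the first low-confidence question triggers one call to `ask_human`; repeating the same question is served from `memory` without bothering anyone.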

The SuperDataScience podcast is available on all major podcasting platforms, YouTube, and at SuperDataScience.com.

AI Making Theoretical Physics Breakthroughs

A.I. is now directly advancing science. "SuperChat", a powerful internal OpenAI model, recently helped crack a particle physics problem that had stumped researchers for over a year. Here's what happened:

THE PROBLEM

Four theoretical physicists (from Harvard, the Institute for Advanced Study, Cambridge and Vanderbilt) had been studying interactions involving gluons — the particles that "glue" quarks together inside protons and neutrons, essentially holding all matter together.

For decades, textbooks said a specific type of gluon interaction (called "single-minus" configurations) had a "scattering amplitude" of zero (i.e., these interactions simply could not occur).

The team suspected otherwise, and proved it for small numbers of gluons... but as they tried to generalize the formula, the expressions became dozens of terms long and unworkable. After about a year of grinding away by hand, they were stuck.

THE BREAKTHROUGH

They fed their complicated formulae into GPT-5.2 Pro. The model simplified an expression with 32 variables down to a compact product fitting on a single line.

Asked to generalize for any number of gluons, the model replied within minutes with what it called (I love this!) the "obvious" generalization.

A more powerful internal OpenAI model (which the researchers called "SuperChat") then produced a formal proof after about 12 hours of autonomous reasoning. The physicists checked step by step and confirmed it was correct.

The team then extended the approach to gravitons (hypothetical particles thought to carry the gravitational force), releasing the results in their second arXiv preprint a few weeks later.

CAVEATS

These are preprints, not yet peer-reviewed papers.

The results apply to a very specific mathematical regime at the simplest level of calculation ("tree level").

Human physicists were essential for defining the problem, providing the initial data and verifying the output.

WHY IT MATTERS

As one researcher put it: The hard part is no longer the physics itself; the hard part is now verifying the results and writing them up. AI compressed months of work into weeks.

This may be a template for AI-assisted research more broadly: AI generates conjectures from patterns in the data, and human experts verify those conjectures through rigorous math and physical consistency checks.

It's not autonomous AI science; it's augmented human science. And that model could scale across disciplines, from pure math to drug discovery to materials science.

The SuperDataScience podcast is available on all major podcasting platforms, YouTube, and at SuperDataScience.com.

Agentic Data Management and the Future of Enterprise AI, with Rohit Choudhary

Because of the vast tokens generated by agentic A.I. workflows, my guest today Rohit Choudhary sees enterprise data soon increasing at nearly 10x per YEAR. He's zen though... because he's built the platform to handle it...

More on Rohit:

Founder and CEO of Acceldata, a Bay Area software company that has raised nearly $100m in venture capital to advance data observability and Agentic Data Management for the A.I. era.

Previously, was Director of Engineering at Hortonworks, where he led large-scale distributed systems initiatives across open-source data platforms.

Some of the great topics covered in this episode:

How Rohit coined the term "data observability" in 2018.

Fixing bad data at the point of consumption can be roughly a thousand times more expensive than catching and fixing it as it flows through the pipeline.

For your enterprise data to be AI ready, they need to satisfy multiple dimensions, incl. technical accuracy and business-context compliance.

Enterprise data grow 4-5x year-over-year now, accelerating to nearly 10x soon, driven largely by the explosion of A.I. agents generating queries and activity at a scale that dwarfs human users.

The most valuable developers won't necessarily be the best programmers — they'll be the ones with the clearest thinking, the deepest domain expertise, and the curiosity to articulate precisely what outcomes they need.

The SuperDataScience podcast is available on all major podcasting platforms, YouTube, and at SuperDataScience.com.

A Post-Transformer Architecture Crushes Sudoku (Transformers Solve ~0%)

A game millions of people solve over morning coffee is exposing a fundamental weakness in the Transformer-based LLMs that dominate A.I. today. Here's why Sudoku matters for the future of A.I.:

THE BENCHMARK

Pathway tested its post-transformer architecture, BDH (Baby Dragon Hatchling 🐲) against "Sudoku Extreme," a collection of ~250,000 of the hardest Sudoku puzzles available.

Leading LLMs (such as o3-mini, DeepSeek-R1, Claude 3.7 Sonnet) scored effectively zero percent.

BDH, in stark contrast, solved them at 97.4% accuracy. That's not a marginal gap... it's a categorical one.

WHY SUDOKU IS A GREAT A.I. TEST

Sudoku is a constraint-satisfaction problem: Every move must satisfy multiple rules simultaneously across rows, columns and boxes. It demands search, tracking and backtracking — well beyond pattern-matching.

This makes it a clean proxy for real-world reasoning in medicine, law, operations, planning and tons of other fields, where you balance competing constraints under uncertainty.
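
To make "search, tracking and backtracking" concrete, here is a minimal sketch of a classical backtracking Sudoku solver. It is a textbook constraint-satisfaction routine I'm including for illustration; it has nothing to do with how BDH or any LLM works internally, and the example puzzle is an ordinary one, not taken from Sudoku Extreme.

```python
def candidates(grid, r, c):
    """Digits that break no row, column, or 3x3-box constraint at (r, c)."""
    used = set(grid[r]) | {grid[i][c] for i in range(9)}
    br, bc = 3 * (r // 3), 3 * (c // 3)
    used |= {grid[i][j] for i in range(br, br + 3) for j in range(bc, bc + 3)}
    return [d for d in range(1, 10) if d not in used]


def solve(grid):
    """Fill empty cells (0) in place; return True if a full solution exists."""
    for r in range(9):
        for c in range(9):
            if grid[r][c] == 0:
                for d in candidates(grid, r, c):
                    grid[r][c] = d      # tentative move
                    if solve(grid):
                        return True
                    grid[r][c] = 0      # backtrack: undo the move
                return False            # dead end, caller must revise
    return True                         # no empty cells left


PUZZLE = [
    [5, 3, 0, 0, 7, 0, 0, 0, 0],
    [6, 0, 0, 1, 9, 5, 0, 0, 0],
    [0, 9, 8, 0, 0, 0, 0, 6, 0],
    [8, 0, 0, 0, 6, 0, 0, 0, 3],
    [4, 0, 0, 8, 0, 3, 0, 0, 1],
    [7, 0, 0, 0, 2, 0, 0, 0, 6],
    [0, 6, 0, 0, 0, 0, 2, 8, 0],
    [0, 0, 0, 4, 1, 9, 0, 0, 5],
    [0, 0, 0, 0, 8, 0, 0, 7, 9],
]

grid = [row[:] for row in PUZZLE]  # copy so the puzzle itself stays untouched
solved = solve(grid)
```

The key line is the one that resets a cell to 0: the solver can silently undo a bad guess and try another, which is exactly the kind of revision that is awkward when every decision has to be emitted as text.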

WHY TRANSFORMERS STRUGGLE

LLMs turn every problem into text and solve it by predicting the next token. That works brilliantly for language tasks... but Sudoku doesn't live in language.

A transformer's internal state is constrained to ~1,000 floating-point values per token, and each decision gets locked in as text is generated. It can't hold multiple candidate strategies in parallel or backtrack without verbalizing every step.

WHAT BDH DOES DIFFERENTLY

BDH maintains a much larger internal "latent reasoning space" that isn't forced into text (think of a chess grandmaster playing 20 blindfold games without whispering moves to herself).

It uses sparse positive activations (~5% of neurons firing at any time), far more biologically plausible than the dense activation in transformers.

It's a state-based model (no standard attention mechanism) that continuously updates its internal state via a mechanism inspired by biological neuroscience (Hebbian learning: "neurons that fire together wire together").

It achieves continual learning: BDH can pick up a new game's rules and reach advanced-beginner level in ~20 minutes, then improve through play... at roughly one-tenth the cost that Transformer-based LLMs incur to achieve their near-zero scores.
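
For intuition on "sparse positive activations" and "fire together, wire together", here is a toy sketch of a plain Hebbian weight update in pure Python. It is the generic textbook rule, not Pathway's BDH code; the function names, the learning rate and the choice of `k` are assumptions made purely for illustration.

```python
def sparse_positive(values, k):
    """Keep only the k largest entries, clipped at zero; everything else is 0."""
    keep = set(sorted(range(len(values)), key=lambda i: values[i], reverse=True)[:k])
    return [max(v, 0.0) if i in keep else 0.0 for i, v in enumerate(values)]


def hebbian_update(weights, pre, post, lr=0.1):
    """Return new weights with w[i][j] increased by lr * post[i] * pre[j]."""
    return [
        [w + lr * post[i] * pre[j] for j, w in enumerate(row)]
        for i, row in enumerate(weights)
    ]


pre = sparse_positive([0.9, -0.3, 0.1, 0.7], k=2)   # only inputs 0 and 3 fire
post = sparse_positive([0.2, 0.8, -0.5], k=1)       # only output 1 fires
weights = [[0.0] * 4 for _ in range(3)]
weights = hebbian_update(weights, pre, post)
```

Only the connections between co-active units (inputs 0 and 3 to output 1) change; every other weight stays at zero, which is what makes the update both local and sparse.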

CAVEAT

BDH is still early: It has been demonstrated at a ~1 billion parameter scale (comparable to GPT-2), not yet at frontier scale.

BOTTOM LINE

...but the data are clear: 0% vs. 97.4% is not incremental. It suggests the transformer's reasoning ceiling is real and alternative architectures can address it. Exciting to see alternatives to the dominant but limiting Transformer architecture emerge!

The SuperDataScience podcast is available on all major podcasting platforms, YouTube, and at SuperDataScience.com.

Attention, World Models and the Future of AI, with Prof. Kyunghyun Cho

What's going to be the next big step function in A.I.? To find out, I sat down with Prof. KyungHyun Cho, whose 200,000 citations put him among the most influential A.I. researchers in the world... and he's a delight to listen to!

In case you aren't already aware of KyungHyun:

Iconic New York University professor of computer science and data science.

Co-directs the Global A.I. Frontier Lab alongside Yann LeCun.

Regularly keynotes at the most prestigious academic A.I. conferences (including being a keynote at NeurIPS 2025).

Was a postdoc under Yoshua Bengio at the Université de Montréal, where they coauthored a paper introducing attention for neural networks, a technique that is ubiquitous within the transformer-based LLMs that enable most A.I. capabilities today.

Lots of other hugely influential papers on deep recurrent neural networks, neural machine translation, visual attention, speech recognition and multivariate time-series modeling.

In today's episode, which will be of particular interest to hands-on A.I. practitioners, KyungHyun eloquently discusses:

The human story behind the invention of attention.

World models.

Why today’s models have already captured most correlations in passive data, making the real challenge about actively choosing which data to collect.

Whether A.I. needs high-fidelity, step-by-step imagination or whether a high-level latent representation that lets it skip ahead is sufficient.

How he's adapting computer-science and A.I. education at the university level now that such capable code-generating agents exist.

The SuperDataScience podcast is available on all major podcasting platforms, YouTube, and at SuperDataScience.com.

NVIDIA’s Nemotron 3 Super: The Perfect LLM for Multi-Agent Systems

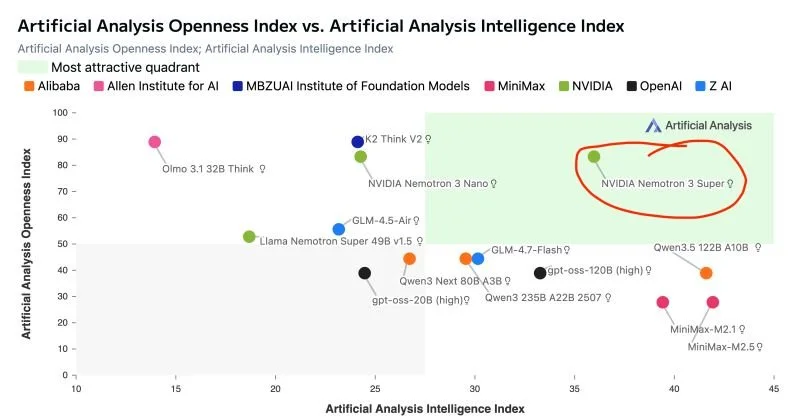

As I've highlighted in this chart, NVIDIA's new "Nemotron 3 Super" LLM stands alone in terms of openness and capability. It's also PERFECT for multi-agent workflows. Read on:

ARCHITECTURE

Nemotron 3 Super has 120 billion parameters, but only 12 billion are active at any time thanks to a Mixture-of-Experts (MoE) design. You get frontier-class knowledge at a fraction of the compute cost.

Combines transformer attention layers with Mamba state-space layers... a hybrid approach that delivers a practical one-million-token context window with efficient, linear-time sequence processing.

A novel LatentMoE technique compresses tokens before routing, allowing 4x as many expert specialists to be consulted per token versus a traditional MoE setup.

Multi-Token Prediction enables up to 3x speedup for structured generation tasks like code and tool calls.
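
To illustrate why only a fraction of an MoE model's parameters are active per token, here is a minimal sketch of generic top-k expert routing. This is the standard textbook mechanism, not NVIDIA's LatentMoE; the function names, the toy "experts" and the gate scores are all made up for illustration.

```python
import math


def top_k_route(gate_logits, k):
    """Return {expert_index: weight} for the k highest-scoring experts."""
    top = sorted(range(len(gate_logits)), key=lambda i: gate_logits[i], reverse=True)[:k]
    m = max(gate_logits[i] for i in top)                    # for numerical stability
    exps = {i: math.exp(gate_logits[i] - m) for i in top}   # softmax over the top k only
    total = sum(exps.values())
    return {i: e / total for i, e in exps.items()}


def moe_forward(x, experts, gate_logits, k):
    """Run only the selected experts and mix their outputs by gate weight."""
    routes = top_k_route(gate_logits, k)
    return sum(w * experts[i](x) for i, w in routes.items())


# Four toy experts; in a real model each would be a feed-forward network.
experts = [lambda x, s=s: s * x for s in (1.0, 2.0, 3.0, 4.0)]
gate_logits = [2.0, 0.5, 1.0, -1.0]   # hypothetical router scores for one token
routes = top_k_route(gate_logits, k=2)
output = moe_forward(1.0, experts, gate_logits, k=2)

# The headline figures above imply this share of parameters is used per token.
active_fraction = 12e9 / 120e9
```

With `k=2`, only experts 0 and 2 run for this token; the other two cost nothing. The same idea at scale is how 12 billion of 120 billion parameters (10%) can be active at a time.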

PERFORMANCE

Up to 2.2x higher throughput than comparably-sized GPT-OSS-120B from OpenAI; up to 7.5x higher throughput than Qwen 3.5 (122B) from Alibaba Cloud... while matching or exceeding both on accuracy.

Pre-trained natively in 4-bit NVFP4 precision, pushing inference up to 4x faster on Blackwell GPUs versus FP8 on Hopper, with no accuracy loss.

Currently powers NVIDIA's AI-Q research agent to #1 on both DeepResearch Bench leaderboards.

WHY IT MATTERS FOR AGENTIC AI

Multi-agent workflows generate up to 15x more tokens than standard chat due to resending full histories, tool outputs and intermediate reasoning — the "context explosion" problem. A million-token context window lets agents retain full workflow state without truncation.

Complex agents need to reason at every step, but deploying large models for every subtask is too slow and costly. Nemotron 3 Super's sparse MoE + Mamba efficiency makes step-by-step reasoning affordable at scale.
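
A quick back-of-the-envelope sketch shows why resending full histories blows up token counts. The turn sizes below are invented and the function name is hypothetical; this does not attempt to reproduce the 15x figure, only the shape of the growth.

```python
def tokens_processed(turn_tokens, resend_history=True):
    """Total input tokens across a workflow.

    turn_tokens[i] is the number of NEW tokens added at turn i. If the full
    history is resent each turn, every turn pays for everything before it.
    """
    total, history = 0, 0
    for new in turn_tokens:
        history += new
        total += history if resend_history else new
    return total


turns = [1000] * 10  # ten steps, each adding 1,000 new tokens (made-up numbers)
with_resend = tokens_processed(turns, resend_history=True)
without_resend = tokens_processed(turns, resend_history=False)
```

Here the resending workflow processes 55,000 tokens versus 10,000 without resending, and the gap widens quadratically as the number of steps grows.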

OPENNESS & ADOPTION

NVIDIA is releasing open weights under a permissive commercial license, plus over 10 trillion tokens of training data, 15 RL training environments and full evaluation recipes.

Already being adopted by Perplexity (search) and CodeRabbit (coding assistant) as well as enterprises like Siemens and Palantir.

AVAILABILITY

Weights are on Hugging Face for self-hosting.

For cloud deployment, available via Google Cloud Vertex AI and Oracle Cloud.

For hassle-free inference, there are several options including Lightning AI (where I hold a fellowship), which offers among the fastest inference speeds: a whopping 480 output tokens per second (according to independent benchmarking by Artificial Analysis).

The SuperDataScience podcast is available on all major podcasting platforms, YouTube, and at SuperDataScience.com.

Unmetered Intelligence is Heralding the Next Renaissance, with Zack Kass

Today's episode is one of my fave convos ever. Based on his new bestseller, Zack Kass makes a clear case for why cheap abundant intelligence is heralding the next Renaissance — the greatest period for humans yet.

More on Zack:

Was head of go-to-market at OpenAI from 2021 to 2023, including during the initial public launch of ChatGPT.

Advises Fortune 1000 board rooms, including Coca-Cola, Morgan Stanley and Amgen.

His book, "The Next Renaissance: A.I. and the Expansion of Human Potential", went on sale recently and is already a national bestseller. In it, he argues that A.I. will provide the greatest leap in human history.

Today's episode should be of great interest to any listener. In it, we discuss:

How to actively counter tech pessimism.

Ways AI can transform education for the better.

The "intellectual K-curve" that empowers motivated learners.

His four principles for thriving in the age of A.I.

The SuperDataScience podcast is available on all major podcasting platforms, YouTube, and at SuperDataScience.com.

When Will The AI Bubble Burst? How Bad Will It Be?

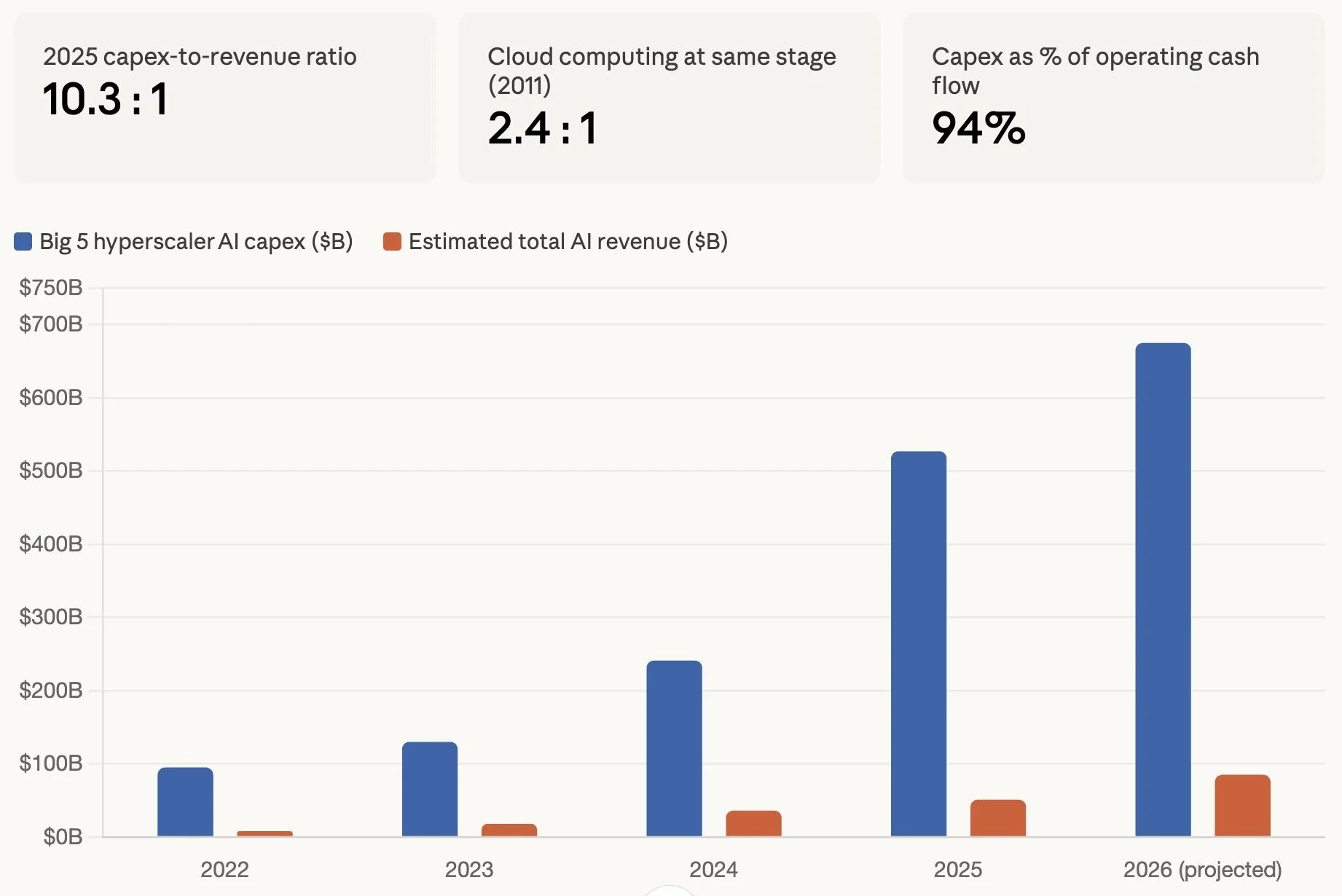

There's mounting evidence that we're in an A.I.-infrastructure investment bubble... but, even if investors lose their shirts, an A.I. bubble bursting would be great news for most of us! How could this be? Read on:

A BUBBLE IS LIKELY HERE

The bull case is that we're in a boom and the fundamentals will catch up. However, various data suggest we're in a bubble.

E.g., OpenAI alone has committed to $600B–$1.4T in infrastructure spending over the coming years — staggering for a company generating ~$13B in annual revenue.

As shown in the chart I made for this post, the five largest hyperscalers had a 10:1 capex-to-revenue ratio in 2025, which dwarfs the 2:1 ratio for cloud-computing investment at the ~same stage.

With capex at 94% of operating cash flow, companies like Google that have famously large cash piles are now issuing bonds.

Circular financing is inflating numbers: NVIDIA pumped $100B into OpenAI so that OpenAI can build data centers full of NVIDIA chips.

WHY BUBBLES AREN'T ALL BAD

Investor Byrne Hobart, CFA argues in his book "Boom" that bubbles have powered many of humanity's greatest breakthroughs — from semiconductors to the Apollo program.

His key insight: participants in a tech race build economic complements to one another. Rising asset prices signal the tech is real, encouraging the investments that make the whole ecosystem work — a self-fulfilling prophecy, but a productive one.

HISTORICAL EVIDENCE

Dot-com era: telecom companies laid 80M+ miles of fiber-optic cable. Most went bankrupt... but bandwidth costs dropped 90%, giving us YouTube, Netflix and cloud computing.

Britain's 1840s railway mania ruined the original investors, yet the network became the backbone of the Industrial Revolution.

Therefore: bubbles leave behind infrastructure the rest of us benefit from for decades.

A WORD ON TIMING

Hobart notes that media called dot-com trading "nutty" back in 1995. Yet at the Nasdaq's post-crash low in 2002, it was still 40% above 1995 levels.

Warning signs of a bubble precede the peak by an unpredictable amount. Acting on them too early can leave you worse off.

WHAT CAN YOU DO?

Diversify your skill set: go deeper into fundamentals (model architecture, optimization, evaluation) rather than relying on wrappers around a single vendor's LLMs.

Build a financial cushion: bubbles create paper wealth and inflated comp packages. Don't let lifestyle inflation consume all of it.

Invest in your network and reputation: When hiring freezes thaw, the people who get picked up first are those other practitioners already know and respect.

The SuperDataScience podcast is available on all major podcasting platforms, YouTube, and at SuperDataScience.com.

AI Systems Performance Engineering, with Chris Fregly

Chris Fregly spent $6000 at Starbucks writing a 1000-page book NVIDIA's own docs couldn't provide. In today's episode, the three-time bestselling author reveals all, updating everything you know about engineering A.I. systems.

More on Chris if you don't know him already:

Long-time A.I. systems performance specialist at Amazon Web Services (AWS), where he, for example, pioneered the design and launch of SageMaker and Bedrock.

Was Chief Product Officer at PipelineAI (acquired by AWS).

Previously held Principal Engineer roles at Databricks and Netflix (earning him an Emmy)!

Was an investor/advisor in xAI (acquired by SpaceX) and Groq (acquired by NVIDIA).

Three-time author of O'Reilly books.

His latest book, "A.I. Systems Performance Engineering" is a thousand-page tome that was published in December and has received rave reviews so far.

Today's episode will appeal primarily to hands-on A.I. practitioners like A.I. engineers, data scientists and software developers. You'll learn a ton about GPUs and getting the best performance from them!

The SuperDataScience podcast is available on all major podcasting platforms, YouTube, and at SuperDataScience.com.

In Case You Missed It in February 2026

Wow, loved the conversations I had with my guests in February! ICYMI, today's episode of my podcast features the best parts of my conversations with guests last month... specifically:

Lightning AI founder and CEO William Falcon on how he converted his wildly successful open-source project PyTorch Lightning into a startup with over $500m in ARR.

Princeton professor of both computer science and psychology, Tom Griffiths, on how (drawing on his latest bestselling book "The Laws of Thought") we might adapt our understanding of human intelligence to guide designs for AI systems.

Antje Barth, a Member of Technical Staff within Amazon’s prestigious "AGI Labs", fills us in on what their latest product, Nova Act, can do for AI developers.

Praveen Murugesan, the VP of Engineering at Samsara (a publicly-listed IoT company), fills us in on how quantum physics might be the catalyst for creating AI agents that can operate free from human intervention.

The SuperDataScience podcast is available on all major podcasting platforms, YouTube, and at SuperDataScience.com.